The point was that we utilized the chunk and pull strategy to pull the data separately by logical units and building a model on each chunk. So these models (again) are a little better than random chance. Rsample::initial_split(prop = 0.9, strata = "is_delayed") %>% outputs the out-of-sample AUROC (a common measure of model quality)ĭplyr::filter(carrier = carrier_name) %>%.I’m going to start by just getting the complete list of the carriers. I’m going to separately pull the data in by carrier and run the model on each carrier’s data. This is exactly the kind of use case that’s ideal for chunk and pull. In this case, I want to build another model of on-time arrival, but I want to do it per-carrier. After I’m happy with this model, I could pull down a larger sample or even the entire data set if it’s feasible, or do something with the model from the sample. Including sampling time, this took my laptop less than 10 seconds to run, making it easy to iterate quickly as I want to improve the model.

I built a model on a small subset of a big data set. mod <- glm(is_delayed ~ carrier +ĭf_test$pred <- predict(mod, newdata = df_test)Īuc <- suppressMessages(pROC::auc(df_test$is_delayed, df_test$pred))Īs you can see, this is not a great model and any modelers reading this will have many ideas of how to improve what I’ve done.

Now let’s build a model – let’s see if we can predict whether there will be a delay or not by the combination of the carrier, the month of the flight, and the time of day of the flight. # Take first 20K for each class for training setĬount(df_test, is_delayed) # A tibble: 2 x 2 # Assign random rank (using random and row_number from postgres) These classes are reasonably well balanced, but since I’m going to be using logistic regression, I’m going to load a perfectly balanced sample of 40,000 data points.įor most databases, random sampling methods don’t work super smoothly with R, so I can’t use dplyr::sample_n or dplyr::sample_frac. # Remove small carriers that make modeling difficultįilter(!is.na(is_delayed) & !carrier %in% c("OO", "HA"))ĭf %>% count(is_delayed) # A tibble: 2 x 2 # Get just hour (currently formatted so 6 pm = 1800) Let’s start with some minor cleaning of the data # Create is_delayed column in database This is a great problem to sample and model.

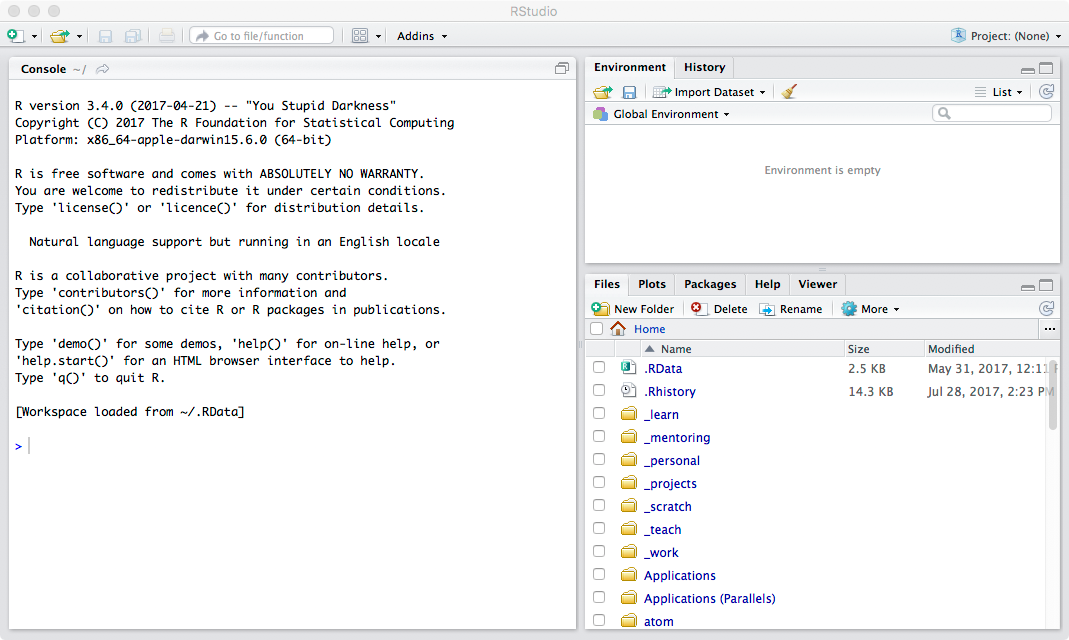

Let’s say I want to model whether flights will be delayed or not. With only a few hundred thousand rows, this example isn’t close to the kind of big data that really requires a Big Data strategy, but it’s rich enough to demonstrate on. I’m using a config file here to connect to the database, one of RStudio’s recommended database connection methods: library(DBI) Let’s start by connecting to the database. I’ve preloaded the flights data set from the nycflights13 package into a PostgreSQL database, which I’ll use for these examples. And, it important to note that these strategies aren’t mutually exclusive – they can be combined as you see fit! In this post, I’ll share three strategies. Nevertheless, there are effective methods for working with big data in R. 1 This is an especially big problem early in developing a model or analytical project, when data might have to be pulled repeatedly. For example, the time it takes to make a call over the internet from San Francisco to New York City takes over 4 times longer than reading from a standard hard drive and over 200 times longer than reading from a solid state hard drive. Because you’re actually doing something with the data, a good rule of thumb is that your machine needs 2-3x the RAM of the size of your data.Īn other big issue for doing Big Data work in R is that data transfer speeds are extremely slow relative to the time it takes to actually do data processing once the data has transferred. The data has to fit into the RAM on your machine, and it’s not even 1:1. The fact that R runs on in-memory data is the biggest issue that you face when trying to use Big Data in R. But this is still a real problem for almost any data set that could really be called big data. Hardware advances have made this less of a problem for many users since these days, most laptops come with at least 4-8Gb of memory, and you can get instances on any major cloud provider with terabytes of RAM. 
In this article, I’ll share three strategies for thinking about how to use big data in R, as well as some examples of how to execute each of them.īy default R runs only on data that can fit into your computer’s memory. In fact, many people (wrongly) believe that R just doesn’t work very well for big data. For many R users, it’s obvious why you’d want to use R with big data, but not so obvious how.
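To make the chunk and pull idea in this post concrete, here is a minimal sketch in base R. It is not the code from the post. The table `flights_db` is synthetic data standing in for the database, `pull_chunk()` is a hypothetical helper standing in for a filtered database query, and the AUROC is computed with a rank-based formula rather than `pROC::auc`, so that the example runs without a database or extra packages.

```r
# Sketch of "chunk and pull" using base R only.
# ASSUMPTION: flights_db is synthetic data standing in for the database table.
set.seed(1)
n <- 6000
flights_db <- data.frame(
  carrier = sample(c("AA", "DL", "UA"), n, replace = TRUE),
  month   = sample(1:12, n, replace = TRUE),
  hour    = sample(5:23, n, replace = TRUE)
)
# Synthetic outcome: later flights are somewhat more likely to be delayed
flights_db$is_delayed <- rbinom(n, 1, plogis(-2 + 0.15 * flights_db$hour))

# ASSUMPTION: hypothetical helper; in a real setup this would be a filtered
# database query whose result is collected into R.
pull_chunk <- function(carrier_name) {
  flights_db[flights_db$carrier == carrier_name, ]
}

# Rank-based AUROC (Mann-Whitney form), used here in place of pROC::auc
auroc <- function(label, score) {
  r <- rank(score)
  n_pos <- sum(label == 1)
  n_neg <- sum(label == 0)
  (sum(r[label == 1]) - n_pos * (n_pos + 1) / 2) / (n_pos * n_neg)
}

# Pull one carrier's chunk, split it, fit a model, return out-of-sample AUROC
carrier_model <- function(carrier_name) {
  chunk <- pull_chunk(carrier_name)
  # 90/10 train/test split, sampled separately within each class
  by_class <- split(seq_len(nrow(chunk)), chunk$is_delayed)
  train_idx <- unlist(lapply(by_class, function(idx) {
    sample(idx, floor(0.9 * length(idx)))
  }))
  train <- chunk[train_idx, ]
  test  <- chunk[-train_idx, ]
  mod <- glm(is_delayed ~ factor(month) + hour, family = binomial, data = train)
  auroc(test$is_delayed, predict(mod, newdata = test))
}

# Get the complete list of carriers, then model each chunk separately
carriers <- sort(unique(flights_db$carrier))
aucs <- vapply(carriers, carrier_model, numeric(1))
print(round(aucs, 3))
```

Only one carrier’s rows are in the modeling step at a time, which is the property that matters when the full table is too large to pull at once. Because the chunks are independent, the `vapply` call could also be swapped for a parallel map.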
